What an EQ filter does is boost the gain (audio level) of a range of frequencies. (In fact, as you’ll see in all three examples, the control ranges are all numbered the same and we consistently use #2 and #4.) The two control points we are most interested in for this article are #2 and #4 which control frequencies at the bottom and top of human speech. A high-cut filter used for removing extreme high frequencies. Frequencies above human speech, used for brightening music and adding “air” Frequencies at the upper-end (treble) of human speech, we use this setting to improve clarity Frequencies in the middle of human speech Frequencies at the low-end (bass) of human speech, we use this setting to warm-up a voice Frequencies below human speech used for enhancing drums and bass guitar A low-cut filter used for removing deep rumbles

The seven white dots represent control points: The blue line represents the range of human hearing from 20 – 20,000 Hz (bass is ALWAYS on the left). To apply an EQ filter, select the track, then choose Effects > Filter and EQ > Parametric Equalizer. Here I’ve added a female narrator to my mix. And the tool we use to accomplish both these tasks is called an EQ filter ( EQ is shorthand for “equalization”). To improve clarity, we boost a range of higher frequencies. To “warm up” a voice, we boost a range of bass frequencies. Which gets me to the purpose of this article. That’s because the low-frequency sounds pass through the wall, but the high-frequency sounds do not. By boosting specific frequencies, we can make sure that our audience is better able to understand what’s being said. You can hear them talking, but you can’t understand what they are saying. A good analogy is listening to two people talk on the other side of a wall. This means that, while we can hear that someone is talking, when we can’t clearly hear high frequencies, it becomes difficult to understand the dialog. While both sounds are formed the same way, air squeezing between the tip of tongue and the roof of the mouth, if you can hear the hiss, the letter is an “S.” If you can’t, it’s an “F.”Īs we age, our ability to hear high-frequency sounds decreases. For example, the difference between the letter “S” – which has a hissing sound – and “F” – which lacks that hissing sound – is roughly 6,000 Hz in men and 8,000 Hz in women. Consonants, in contrast, are generally higher frequency sounds – they provide clarity to speech. When it comes to speech, vowels are low-frequency sounds – they lend the voice its richness, sexiness, and identity. So, while human hearing spans ten octaves, human speech only covers about five octaves. What this means is that each time the frequencies double, the pitch goes up an octave (for you music majors out there). 
You only need to compare the voices of James Earl Jones to Chris Colfer.)Īudio frequencies are logarithmic. (And, yes, there is LOTS of variation between individuals. For example, an adult male voice is roughly 200 – 6,000 Hz, while an adult female voice is roughly 400 – 8,000 Hz.

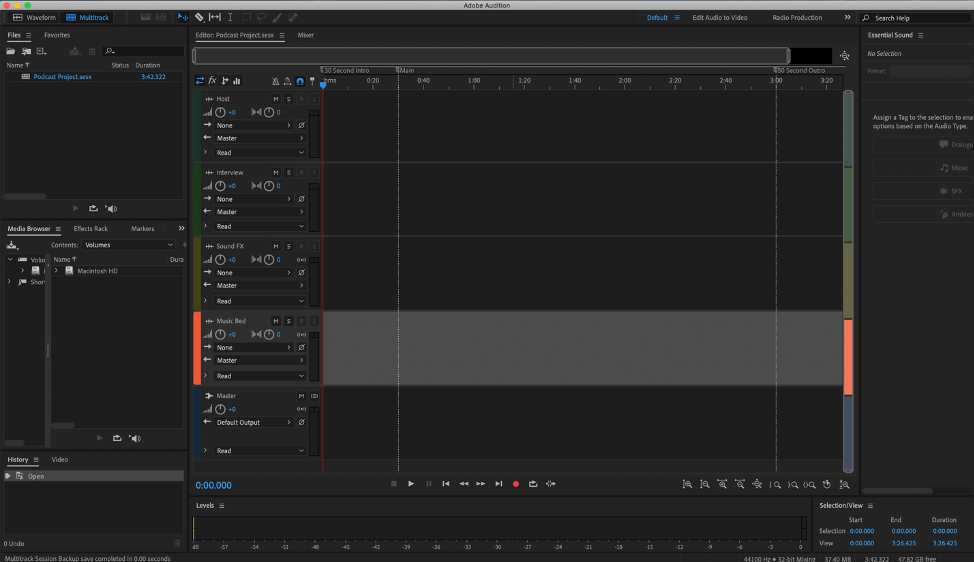

While our hearing encompasses this range, which we call “20 to 20K,” most of the sounds we hear only use a portion of it. Children can hear frequencies beyond this range, while older folks hear less. This range is typical for an 18-year-old adult. Normal human hearing is defined as a range of frequencies from 20 cycles (Hz) to 20,000 Hz. Whether we are listening to music, speech or noise, all human hearing is based on frequencies – the variations in the pitch of a sound – and volume. The first article covers Final Cut Pro X and the second article covers Adobe Premier Pro CC. NOTE: Since this article was released, I added two companion articles on boosting and smoothing audio levels. Once you understand how this technique works in one application you can use it anywhere, because all that changes is the interface. In this article, I’ll show you how to improve the sound of a voice using FCP X, Premiere Pro and Adobe Audition.

One of the sad facts of life is that as we get older, we lose the ability to hear high-frequency sounds, which means that it becomes harder to understand what people are saying. This is especially important when creating projects for older audiences who’s hearing may not be as good as you would like. However, I also use these techniques to warm up a voice or, more importantly, to improve the clarity of speech. Most of the time, we use this shaping capability to create ear-catching sound effects. Just as we can shape specific colors in our images to create a specific look, we can “shape” specific sounds in our audio to create a specific sound.
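To make “boost the gain of a range of frequencies” a little more concrete, here is a minimal Python sketch of a single EQ band. It is not how FCP X, Premiere Pro or Audition build their filters internally. It assumes the widely used “Audio EQ Cookbook” peaking-filter formulas, and the function names, 48 kHz sample rate, Q value and the +4 dB boost at 4,000 Hz are all illustrative assumptions rather than settings from this article.

```python
import cmath
import math


def peaking_eq_coefficients(center_hz, gain_db, q=1.0, sample_rate=48000):
    """Biquad coefficients for one peaking EQ band (Audio EQ Cookbook form)."""
    amp = 10 ** (gain_db / 40)                    # amplitude factor
    w0 = 2 * math.pi * center_hz / sample_rate    # center frequency in radians/sample
    alpha = math.sin(w0) / (2 * q)                # controls how wide the boosted range is
    b = (1 + alpha * amp, -2 * math.cos(w0), 1 - alpha * amp)
    a = (1 + alpha / amp, -2 * math.cos(w0), 1 - alpha / amp)
    return b, a


def gain_db_at(freq_hz, b, a, sample_rate=48000):
    """Gain in dB that the filter applies to a tone at freq_hz."""
    z1 = cmath.exp(-1j * 2 * math.pi * freq_hz / sample_rate)  # z^-1 on the unit circle
    numerator = b[0] + b[1] * z1 + b[2] * z1 * z1
    denominator = a[0] + a[1] * z1 + a[2] * z1 * z1
    return 20 * math.log10(abs(numerator / denominator))


# Hypothetical "clarity" boost: +4 dB centered on 4,000 Hz.
b, a = peaking_eq_coefficients(center_hz=4000, gain_db=4.0)
for freq in (100, 1000, 4000, 12000):
    print(f"{freq:>6} Hz: {gain_db_at(freq, b, a):+.2f} dB")
```

The printed gains should peak at the chosen center frequency and fall back toward 0 dB well away from it, which is the same bump you see when you drag a control point upward in a parametric equalizer.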

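The octave counts quoted in this article follow from the doubling rule: the number of octaves between two frequencies is the base-2 logarithm of their ratio. A quick Python check, using the article’s own approximate ranges (the function name is just an illustrative choice):

```python
import math


def octaves_between(low_hz, high_hz):
    """Number of octaves (doublings) from low_hz up to high_hz."""
    return math.log2(high_hz / low_hz)


ranges = {
    "human hearing": (20, 20_000),
    "adult male voice": (200, 6_000),
    "adult female voice": (400, 8_000),
}
for name, (low, high) in ranges.items():
    print(f"{name}: {octaves_between(low, high):.1f} octaves")
```

Hearing comes out at just under ten octaves, and the two voice ranges at roughly 4.9 and 4.3 octaves, which is where “about five” comes from.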